1. Install pyinstaller

2. Install pywin32

3. Install any other modules the project needs
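Before building, it can help to confirm that the packages from the three steps above are importable by the interpreter that will run PyInstaller. The following is a minimal sketch; the helper name missing_modules and the module list are illustrative assumptions (win32api is one of the modules that pywin32 provides), so adjust the list to your own project.

```python
import importlib.util

# Top-level import names to check (an assumed list for this project):
# "PyInstaller" for pyinstaller, "win32api" for pywin32, "scrapy" for Scrapy.
REQUIRED_MODULES = ["PyInstaller", "win32api", "scrapy"]


def missing_modules(names):
    """Return the names that cannot be located by the current interpreter."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules(REQUIRED_MODULES)
    if missing:
        print("Not installed:", ", ".join(missing))
    else:
        print("All required modules are importable.")
```

Anything reported as not installed can then be added with pip before moving on.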

Note:

When a Scrapy project is packaged with pyinstaller, the spider cannot be started with:

```python
cmdline.execute('scrapy crawl douban -o test.csv --nolog'.split())
```

Instead, I start the spider through CrawlerProcess.

For example:

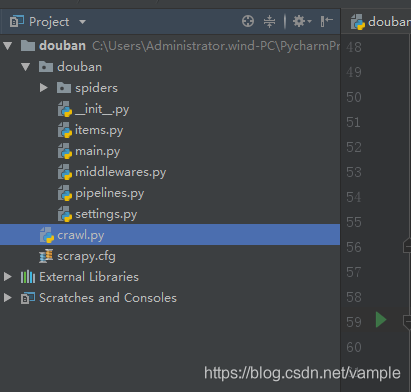

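1. In the root directory of the Scrapy project, create a file named crawl.py (you can choose any name), as shown in the figure above.

The code of crawl.py is as follows: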

```python
# -*- coding: utf-8 -*-
from scrapy.crawler import CrawlerProcess
from scrapy.utils.project import get_project_settings
from douban.spiders.douban_spider import Douban_spider

# Imports required for packaging
import urllib.robotparser
import scrapy.spiderloader
import scrapy.statscollectors
import scrapy.logformatter
import scrapy.dupefilters
import scrapy.squeues
import scrapy.extensions.spiderstate
import scrapy.extensions.corestats
import scrapy.extensions.telnet
import scrapy.extensions.logstats
import scrapy.extensions.memusage
import scrapy.extensions.memdebug
import scrapy.extensions.feedexport
import scrapy.extensions.closespider
import scrapy.extensions.debug
import scrapy.extensions.httpcache
import scrapy.extensions.statsmailer
import scrapy.extensions.throttle
import scrapy.core.scheduler
import scrapy.core.engine
import scrapy.core.scraper
import scrapy.core.spidermw
import scrapy.core.downloader
import scrapy.downloadermiddlewares.stats
import scrapy.downloadermiddlewares.httpcache
import scrapy.downloadermiddlewares.cookies
import scrapy.downloadermiddlewares.useragent
import scrapy.downloadermiddlewares.httpproxy
import scrapy.downloadermiddlewares.ajaxcrawl
import scrapy.downloadermiddlewares.chunked
import scrapy.downloadermiddlewares.decompression
import scrapy.downloadermiddlewares.defaultheaders
import scrapy.downloadermiddlewares.downloadtimeout
import scrapy.downloadermiddlewares.httpauth
import scrapy.downloadermiddlewares.httpcompression
import scrapy.downloadermiddlewares.redirect
import scrapy.downloadermiddlewares.retry
import scrapy.downloadermiddlewares.robotstxt
import scrapy.spidermiddlewares.depth
import scrapy.spidermiddlewares.httperror
import scrapy.spidermiddlewares.offsite
import scrapy.spidermiddlewares.referer
import scrapy.spidermiddlewares.urllength
import scrapy.pipelines
import scrapy.core.downloader.handlers.http
import scrapy.core.downloader.contextfactory

from douban.pipelines import DoubanPipeline
from douban.items import DoubanItem
import douban.settings

if __name__ == '__main__':
    setting = get_project_settings()
    process = CrawlerProcess(settings=setting)
    process.crawl(Douban_spider)
    process.start()
```

2. In the directory that contains crawl.py, run pyinstaller crawl.py. This generates dist, build (can be deleted), and crawl.spec (can be deleted).
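The build in step 2 can also be launched from a small Python helper, which makes it easy to repeat. This is a sketch rather than the author's method: the function names are hypothetical, and the optional --hidden-import flag (a real PyInstaller option for modules its analysis does not detect) is shown only as a possible alternative to listing imports in crawl.py; it has not been verified against this project.

```python
import subprocess
import sys


def pyinstaller_command(script, hidden_imports=()):
    """Build the argument list for `python -m PyInstaller <script>`."""
    cmd = [sys.executable, "-m", "PyInstaller", script]
    for module in hidden_imports:
        # Ask PyInstaller to bundle a module it cannot detect on its own.
        cmd += ["--hidden-import", module]
    return cmd


def build(script="crawl.py", hidden_imports=()):
    """Run the build; raises CalledProcessError if PyInstaller fails."""
    subprocess.run(pyinstaller_command(script, hidden_imports), check=True)
```

Calling build() from the directory that contains crawl.py should be equivalent to typing the command by hand.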

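3. In the directory that contains crawl.exe, create a folder named scrapy. Then go to the folder of your installed scrapy package and copy the two files VERSION and mime.types into the folder you just created.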
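The copy in step 3 can be scripted. The sketch below assumes the two files sit directly in the installed scrapy package directory, as the step describes; the function name copy_scrapy_data and the example output path dist/crawl are assumptions for illustration.

```python
import shutil
from pathlib import Path

# The two data files named in step 3.
DATA_FILES = ("VERSION", "mime.types")


def copy_scrapy_data(scrapy_pkg_dir, exe_dir):
    """Copy Scrapy's data files into <exe_dir>/scrapy and return the new paths."""
    target = Path(exe_dir) / "scrapy"
    target.mkdir(parents=True, exist_ok=True)
    copied = []
    for name in DATA_FILES:
        destination = target / name
        shutil.copy2(Path(scrapy_pkg_dir) / name, destination)
        copied.append(destination)
    return copied
```

A typical call would pass Path(scrapy.__file__).parent as the source (after import scrapy) and the folder holding crawl.exe, for example dist/crawl, as the destination.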

4. Distribute the program, including douban/dist and douban/scrapy.cfg.

Without scrapy.cfg, the configuration in settings.py and pipelines.py cannot be read.
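The reason scrapy.cfg matters is that its [settings] section tells Scrapy which settings module to load. As a rough illustration, the sketch below writes and reads back a minimal scrapy.cfg with the standard library; the helper names are hypothetical, and douban.settings is assumed from the import douban.settings line in crawl.py.

```python
import configparser
from pathlib import Path


def write_scrapy_cfg(directory, settings_module="douban.settings"):
    """Write a minimal scrapy.cfg that points at the project's settings module."""
    config = configparser.ConfigParser()
    config["settings"] = {"default": settings_module}
    path = Path(directory) / "scrapy.cfg"
    with path.open("w", encoding="utf-8") as fh:
        config.write(fh)
    return path


def read_settings_module(cfg_path):
    """Return the settings module named in the [settings] section."""
    config = configparser.ConfigParser()
    config.read(cfg_path, encoding="utf-8")
    return config.get("settings", "default")
```

In practice you would ship the scrapy.cfg that already exists in the project; the point here is only to show which entry the packaged program depends on.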

5. Tested successfully on another machine.

6. With custom pipelines and settings, it seems that the exe built by pyinstaller cannot read the settings and pipelines. Can anyone suggest how to solve this?
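One possible approach to the question in step 6, offered as an untested suggestion rather than a confirmed fix: get_project_settings() only looks for scrapy.cfg when the SCRAPY_SETTINGS_MODULE environment variable is not set, so setting that variable before the call should make it load the named module directly. The helper name below is hypothetical, and douban.settings is assumed from the imports in crawl.py.

```python
import os


def point_scrapy_at_settings(module="douban.settings"):
    """Set SCRAPY_SETTINGS_MODULE unless the caller's environment already did."""
    os.environ.setdefault("SCRAPY_SETTINGS_MODULE", module)
    return os.environ["SCRAPY_SETTINGS_MODULE"]


# Intended use inside crawl.py, before the settings are loaded:
#
#     point_scrapy_at_settings("douban.settings")
#     setting = get_project_settings()
#     process = CrawlerProcess(settings=setting)
```

Because the pipelines are enabled through ITEM_PIPELINES in settings.py, they should be picked up as well once the settings module loads, provided douban.pipelines is bundled in the exe (crawl.py already imports it).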


Original link: https://blog.csdn.net/vample/article/details/86224021