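Keras is arguably one of the easiest deep learning frameworks to get started with.

This post briefly records how to implement several classification networks in Keras, such as AlexNet, VGG, and ResNet.

The dataset is the Kaggle Dogs vs. Cats data.

AlexNet uses LRN (local response normalization), which Keras does not provide as a built-in layer, so BatchNormalization is used in its place here.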
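For readers curious about what is being swapped out, here is a minimal pure-Python sketch of LRN for the channel activations at a single pixel. It is not part of the original post. The function name `local_response_norm` is hypothetical, and the defaults (k=2, n=5, alpha=1e-4, beta=0.75) are the values reported in the AlexNet paper rather than anything this post configures.

```python
def local_response_norm(channels, k=2.0, n=5, alpha=1e-4, beta=0.75):
    """Normalize one pixel's activations across neighbouring channels.

    channels: list of activations, one per feature map, at a single position.
    Each activation is divided by (k + alpha * sum of squares of the
    activations in a window of n adjacent channels) ** beta.
    """
    total = len(channels)
    half = n // 2
    out = []
    for i, a in enumerate(channels):
        lo = max(0, i - half)              # clip the window at the first channel
        hi = min(total - 1, i + half)      # clip the window at the last channel
        sq_sum = sum(v * v for v in channels[lo:hi + 1])
        out.append(a / (k + alpha * sq_sum) ** beta)
    return out


activations = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
normalized = local_response_norm(activations)
print([round(v, 4) for v in normalized])
```

Unlike BatchNormalization, this has no learned parameters and looks only at neighbouring channels of the same sample, so the two layers are not equivalent; the post simply uses BatchNormalization as a convenient stand-in.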

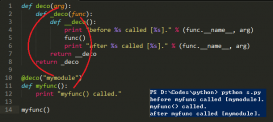

First, create a file named model.py that holds the AlexNet and VGG models, so they can be imported directly.

```python
# coding=utf-8
from keras.models import Sequential, Model
from keras.layers import (Conv2D, MaxPooling2D, BatchNormalization, PReLU,
                          Dense, Dropout, Flatten)


def keras_batchnormalization_relu(layer):
    """Apply BatchNormalization followed by a PReLU activation."""
    BN = BatchNormalization()(layer)
    ac = PReLU()(BN)
    return ac


def AlexNet(resize=227, classes=2):
    model = Sequential()
    # Stage 1
    model.add(Conv2D(filters=96, kernel_size=(11, 11),
                     strides=(4, 4), padding='valid',
                     input_shape=(resize, resize, 3),
                     activation='relu'))
    model.add(BatchNormalization())
    model.add(MaxPooling2D(pool_size=(3, 3), strides=(2, 2), padding='valid'))
    # Stage 2
    model.add(Conv2D(filters=256, kernel_size=(5, 5),
                     strides=(1, 1), padding='same',
                     activation='relu'))
    model.add(BatchNormalization())
    model.add(MaxPooling2D(pool_size=(3, 3), strides=(2, 2), padding='valid'))
    # Stage 3
    model.add(Conv2D(filters=384, kernel_size=(3, 3),
                     strides=(1, 1), padding='same',
                     activation='relu'))
    model.add(Conv2D(filters=384, kernel_size=(3, 3),
                     strides=(1, 1), padding='same',
                     activation='relu'))
    model.add(Conv2D(filters=256, kernel_size=(3, 3),
                     strides=(1, 1), padding='same',
                     activation='relu'))
    model.add(MaxPooling2D(pool_size=(3, 3), strides=(2, 2), padding='valid'))
    # Stage 4
    model.add(Flatten())
    model.add(Dense(4096, activation='relu'))
    model.add(Dropout(0.5))

    model.add(Dense(4096, activation='relu'))
    model.add(Dropout(0.5))

    model.add(Dense(1000, activation='relu'))
    model.add(Dropout(0.5))

    # Output layer
    model.add(Dense(classes, activation='softmax'))
    return model


def AlexNet2(inputs, classes=2, prob=0.5):
    '''
    My own version, trying the other Keras style (the functional API).
    :param inputs: input tensor
    :param classes: number of classes
    :param prob: dropout probability
    :return: the model
    '''
    print("input shape:", inputs.shape)
    conv1 = Conv2D(filters=96, kernel_size=(11, 11), strides=(4, 4), padding='valid')(inputs)
    conv1 = keras_batchnormalization_relu(conv1)
    print("conv1 shape:", conv1.shape)
    pool1 = MaxPooling2D(pool_size=(3, 3), strides=(2, 2))(conv1)
    print("pool1 shape:", pool1.shape)

    conv2 = Conv2D(filters=256, kernel_size=(5, 5), padding='same')(pool1)
    conv2 = keras_batchnormalization_relu(conv2)
    print("conv2 shape:", conv2.shape)
    pool2 = MaxPooling2D(pool_size=(3, 3), strides=(2, 2))(conv2)
    print("pool2 shape:", pool2.shape)

    conv3 = Conv2D(filters=384, kernel_size=(3, 3), padding='same')(pool2)
    conv3 = PReLU()(conv3)
    print("conv3 shape:", conv3.shape)

    conv4 = Conv2D(filters=384, kernel_size=(3, 3), padding='same')(conv3)
    conv4 = PReLU()(conv4)
    print("conv4 shape:", conv4.shape)

    conv5 = Conv2D(filters=256, kernel_size=(3, 3), padding='same')(conv4)
    conv5 = PReLU()(conv5)
    print("conv5 shape:", conv5.shape)

    pool3 = MaxPooling2D(pool_size=(3, 3), strides=(2, 2))(conv5)
    print("pool3 shape:", pool3.shape)

    dense1 = Flatten()(pool3)
    dense1 = Dense(4096, activation='relu')(dense1)
    print("dense1 shape:", dense1.shape)
    dense1 = Dropout(prob)(dense1)

    dense2 = Dense(4096, activation='relu')(dense1)
    print("dense2 shape:", dense2.shape)
    dense2 = Dropout(prob)(dense2)

    predict = Dense(classes, activation='softmax')(dense2)

    model = Model(inputs=inputs, outputs=predict)
    return model


def vgg13(resize=224, classes=2, prob=0.5):
    model = Sequential()
    model.add(Conv2D(64, (3, 3), strides=(1, 1), input_shape=(resize, resize, 3),
                     padding='same', activation='relu', kernel_initializer='uniform'))
    model.add(Conv2D(64, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(MaxPooling2D(pool_size=(2, 2)))

    model.add(Conv2D(128, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(Conv2D(128, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(MaxPooling2D(pool_size=(2, 2)))

    model.add(Conv2D(256, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(Conv2D(256, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(MaxPooling2D(pool_size=(2, 2)))

    model.add(Conv2D(512, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(Conv2D(512, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(MaxPooling2D(pool_size=(2, 2)))

    model.add(Conv2D(512, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(Conv2D(512, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(MaxPooling2D(pool_size=(2, 2)))

    model.add(Flatten())
    model.add(Dense(4096, activation='relu'))
    model.add(Dropout(prob))
    model.add(Dense(4096, activation='relu'))
    model.add(Dropout(prob))
    model.add(Dense(classes, activation='softmax'))
    return model


def vgg16(resize=224, classes=2, prob=0.5):
    model = Sequential()
    model.add(Conv2D(64, (3, 3), strides=(1, 1), input_shape=(resize, resize, 3),
                     padding='same', activation='relu', kernel_initializer='uniform'))
    model.add(Conv2D(64, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(MaxPooling2D(pool_size=(2, 2)))

    model.add(Conv2D(128, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(Conv2D(128, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(MaxPooling2D(pool_size=(2, 2)))

    model.add(Conv2D(256, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(Conv2D(256, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(Conv2D(256, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(MaxPooling2D(pool_size=(2, 2)))

    model.add(Conv2D(512, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(Conv2D(512, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(Conv2D(512, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(MaxPooling2D(pool_size=(2, 2)))

    model.add(Conv2D(512, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(Conv2D(512, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(Conv2D(512, (3, 3), strides=(1, 1), padding='same',
                     activation='relu', kernel_initializer='uniform'))
    model.add(MaxPooling2D(pool_size=(2, 2)))

    model.add(Flatten())
    model.add(Dense(4096, activation='relu'))
    model.add(Dropout(prob))
    model.add(Dense(4096, activation='relu'))
    model.add(Dropout(prob))
    model.add(Dense(classes, activation='softmax'))
    return model
```
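To see how the AlexNet layers above shrink the feature map, the following sketch reproduces the shape arithmetic in plain Python, without Keras. The helper names `conv_out` and `alexnet_feature_size` are hypothetical; the formulas are the usual rules for `'valid'` and `'same'` padding, and the layer parameters are copied from the AlexNet definitions in this post.

```python
def conv_out(size, kernel, stride, padding):
    """Spatial output size of a convolution or pooling layer."""
    if padding == 'same':
        return -(-size // stride)            # ceil(size / stride)
    return (size - kernel) // stride + 1     # 'valid': no padding


def alexnet_feature_size(resize):
    """Return (side length, flattened length) just before the first Dense layer."""
    s = conv_out(resize, 11, 4, 'valid')     # conv1: 11x11, stride 4
    s = conv_out(s, 3, 2, 'valid')           # pool1: 3x3, stride 2
    s = conv_out(s, 5, 1, 'same')            # conv2: 5x5, stride 1
    s = conv_out(s, 3, 2, 'valid')           # pool2
    for _ in range(3):                       # conv3..conv5: 3x3, stride 1, 'same'
        s = conv_out(s, 3, 1, 'same')
    s = conv_out(s, 3, 2, 'valid')           # pool3
    return s, s * s * 256                    # conv5 has 256 filters


for resize in (227, 224):
    print(resize, alexnet_feature_size(resize))
```

With the default 227×227 input the flattened vector has 6×6×256 = 9216 values; with the 224×224 input used later in the training script it has 5×5×256 = 6400, so the first Dense layer simply gets fewer inputs.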

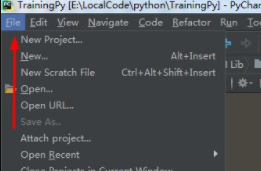

Next, create a train.py file that loads the data and trains the model.

```python
# coding=utf-8
import os

import cv2
import keras
import numpy as np
from keras.layers import Input
from keras.preprocessing.image import ImageDataGenerator

import model
# import modelResNet  # the author's ResNet module; its code is not shown in this post

resize = 224
batch_size = 128
path = "/home/hjxu/PycharmProjects/01_cats_vs_dogs/data"
trainDirectory = '/home/hjxu/PycharmProjects/01_cats_vs_dogs/data/train/'


def load_data():
    train_dir = os.path.join(path, 'train')
    train_data = np.empty((5000, resize, resize, 3), dtype="int32")
    train_label = np.empty((5000, ), dtype="int32")
    test_data = np.empty((5000, resize, resize, 3), dtype="int32")
    test_label = np.empty((5000, ), dtype="int32")
    # Images 0..4999 form the training set: odd indices are dogs (label 1),
    # even indices are cats (label 0).
    for i in range(5000):
        if i % 2:
            img = cv2.imread(os.path.join(train_dir, 'dog.' + str(i) + '.jpg'))
            train_label[i] = 1
        else:
            img = cv2.imread(os.path.join(train_dir, 'cat.' + str(i) + '.jpg'))
            train_label[i] = 0
        train_data[i] = cv2.resize(img, (resize, resize))
    # Images 5000..9999 form the test set, labelled the same way.
    for i in range(5000, 10000):
        if i % 2:
            img = cv2.imread(os.path.join(train_dir, 'dog.' + str(i) + '.jpg'))
            test_label[i - 5000] = 1
        else:
            img = cv2.imread(os.path.join(train_dir, 'cat.' + str(i) + '.jpg'))
            test_label[i - 5000] = 0
        test_data[i - 5000] = cv2.resize(img, (resize, resize))
    return train_data, train_label, test_data, test_label


def main():
    train_data, train_label, test_data, test_label = load_data()
    train_data, test_data = train_data.astype('float32'), test_data.astype('float32')
    train_data, test_data = train_data / 255, test_data / 255

    # One-hot encoding: categorical_crossentropy needs labels converted
    # with to_categorical.
    train_label = keras.utils.to_categorical(train_label, 2)
    test_label = keras.utils.to_categorical(test_label, 2)

    inputs = Input(shape=(224, 224, 3))
    modelAlex = model.AlexNet2(inputs, classes=2)  # build the model

    modelAlex.compile(loss='categorical_crossentropy',
                      optimizer='sgd',
                      metrics=['accuracy'])
    # compile(self, optimizer, loss, metrics=None, loss_weights=None,
    #         sample_weight_mode=None, **kwargs)
    #   optimizer: name of a predefined optimizer, or an optimizer object
    #   loss: name of a predefined loss function, or an objective function
    #   metrics: list of metrics evaluated during training and testing;
    #            typical usage is metrics=['accuracy']
    #   sample_weight_mode: set to "temporal" to weight samples per timestep
    # A custom metric function can be passed too:
    #   def mean_pred(y_true, y_pred):
    #       return K.mean(y_pred)
    #   model.compile(loss='binary_crossentropy', metrics=['accuracy', mean_pred], ...)
    # Custom losses work the same way. Built-in Keras losses include:
    #   mean_squared_error, mean_absolute_error, mean_absolute_percentage_error,
    #   mean_squared_logarithmic_error, squared_hinge, hinge, categorical_hinge,
    #   logcosh, categorical_crossentropy, sparse_categorical_crossentropy,
    #   binary_crossentropy, kullback_leibler_divergence, poisson, cosine_proximity

    modelAlex.summary()  # print the model description

    modelAlex.fit(train_data, train_label,
                  batch_size=batch_size,
                  epochs=50,
                  validation_split=0.2,
                  shuffle=True)
    # fit(self, x=None,           # input data
    #     y=None,                 # labels, a NumPy array
    #     batch_size=32,          # one gradient-descent step is computed per batch
    #     epochs=1,               # number of passes over the training set
    #     verbose=1,              # logging: 0 = silent, 1 = progress bar, 2 = one line per epoch
    #     callbacks=None,         # callback functions
    #     validation_split=0.,    # float in 0-1: fraction of the training set held out
    #                             # for validation; it is not used for training
    #     validation_data=None,   # an (x, y) tuple used as the validation set
    #     shuffle=True,           # "batch" is a special case for HDF5 data that
    #                             # shuffles within batches
    #     class_weight=None,      # dict mapping classes to weights, used to adjust the loss
    #     sample_weight=None,     # NumPy array of per-sample weights, used to adjust the loss
    #     initial_epoch=0,        # epoch to start from, for resuming earlier training
    #     **kwargs)
    # Returns a History object; History.history records how the loss and
    # the other metrics change from epoch to epoch.

    scores = modelAlex.evaluate(train_data, train_label, verbose=1)
    print(scores)

    scores = modelAlex.evaluate(test_data, test_label, verbose=1)
    print(scores)
    modelAlex.save('my_model_weights2.h5')


def main2():
    train_datagen = ImageDataGenerator(rescale=1. / 255,
                                       shear_range=0.2,
                                       zoom_range=0.2,
                                       horizontal_flip=True)
    test_datagen = ImageDataGenerator(rescale=1. / 255)
    train_generator = train_datagen.flow_from_directory(trainDirectory,
                                                        target_size=(224, 224),
                                                        batch_size=32,
                                                        class_mode='binary')

    # Note: validation reads the same directory as training here, so the
    # validation score does not measure generalization.
    validation_generator = test_datagen.flow_from_directory(trainDirectory,
                                                            target_size=(224, 224),
                                                            batch_size=32,
                                                            class_mode='binary')

    inputs = Input(shape=(224, 224, 3))  # only needed for AlexNet2 below
    # modelAlex = model.AlexNet2(inputs, classes=2)
    modelAlex = model.vgg13(resize=224, classes=2, prob=0.5)
    # modelAlex = modelResNet.ResNet50(shape=224, classes=2)
    modelAlex.compile(loss='sparse_categorical_crossentropy',
                      optimizer='sgd',
                      metrics=['accuracy'])
    modelAlex.summary()

    # fit_generator is deprecated in recent TensorFlow/Keras releases,
    # where fit() accepts generators directly.
    modelAlex.fit_generator(train_generator,
                            steps_per_epoch=1000,
                            epochs=60,
                            validation_data=validation_generator,
                            validation_steps=200)

    modelAlex.save('model32.hdf5')


if __name__ == "__main__":
    # If all images sit in a single folder, as in the Kaggle Dogs vs. Cats
    # download, use main().
    # If they are split into one subfolder per class (cats and dogs), use main2().
    main2()
```
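The training script pairs `to_categorical` labels with `categorical_crossentropy` in `main()`, and integer labels from `class_mode='binary'` with `sparse_categorical_crossentropy` in `main2()`. The sketch below is a simplified pure-Python illustration of why both pairings compute the same per-sample loss. The three helper functions are hypothetical stand-ins written for this example, not the Keras implementations (which also clip probabilities and average over a batch).

```python
import math


def one_hot(label, num_classes):
    """What to_categorical does for a single integer label."""
    return [1.0 if i == label else 0.0 for i in range(num_classes)]


def categorical_crossentropy(y_true, y_pred):
    """Cross-entropy against a one-hot target vector."""
    return -sum(t * math.log(p) for t, p in zip(y_true, y_pred))


def sparse_categorical_crossentropy(label, y_pred):
    """Cross-entropy against an integer class index."""
    return -math.log(y_pred[label])


probs = [0.2, 0.8]   # softmax output for one image: P(cat), P(dog)
label = 1            # dog

loss_one_hot = categorical_crossentropy(one_hot(label, 2), probs)
loss_sparse = sparse_categorical_crossentropy(label, probs)
print(loss_one_hot, loss_sparse)
```

The one-hot vector only selects the log-probability of the true class, so the sparse variant gets the same number without building the vector. That is why the generator-based path can feed integer labels straight into a two-unit softmax output.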

Once the model is trained, how do you test it on a single image?

Create a testOneImg.py script with the following code:

```python
# coding=utf-8
import cv2
import numpy as np
from keras.preprocessing.image import img_to_array

import model

pats = '/home/hjxu/tf_study/catVsDogsWithKeras/my_model_weights.h5'
# The architecture built here must match the one that produced the weights file.
modelAlex = model.AlexNet(resize=224, classes=2)
modelAlex.load_weights(pats)

img = cv2.imread('/home/hjxu/tf_study/catVsDogsWithKeras/111.jpg')
img = cv2.resize(img, (224, 224))
x = img_to_array(img / 255)     # 3-D array (224, 224, 3)
x = np.expand_dims(x, axis=0)   # 4-D array (1, 224, 224, 3): Keras expects a batch dimension
print(x.shape)

y_pred = modelAlex.predict(x)   # predicted class probabilities

# decode_predictions only handles 1000-class ImageNet outputs, so the
# two-class result is mapped to a label by hand (0 = cat, 1 = dog, as in train.py).
class_names = ['cat', 'dog']
print("Test image:", class_names[int(np.argmax(y_pred[0]))])
print(y_pred)
```
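Note that `decode_predictions` from `keras.applications` expects a batch of 1000-class ImageNet predictions and rejects a two-class output, so for this model the label has to be read off the softmax vector directly. A minimal sketch of that step in plain Python follows; `CLASS_NAMES` and `describe_prediction` are hypothetical names, and the index order (0 = cat, 1 = dog) is assumed from the labels assigned in `load_data()`.

```python
CLASS_NAMES = ['cat', 'dog']  # assumed order: index 0 = cat, index 1 = dog


def describe_prediction(probabilities, class_names=CLASS_NAMES):
    """Return (label, probability) for the most likely class of one sample."""
    best = max(range(len(probabilities)), key=lambda i: probabilities[i])
    return class_names[best], probabilities[best]


# One row of a predict() result, written out by hand for illustration.
label, confidence = describe_prediction([0.13, 0.87])
print(label, confidence)
```

With a real model, the input would be one row of the prediction array, for example `y_pred[0]` converted to a list.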

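I have to say, Keras really is simple and convenient.

Supplementary notes: understanding residual connections and weight sharing in the Keras functional API

1. Residual connections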

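```python
# coding: utf-8
"""
Residual connection:
    A common graph-like network component that tackles two problems shared
    by all large-scale deep learning models:
        1. vanishing gradients
        2. representational bottlenecks
    (Adding residual connections to any network with more than 10 layers
    is likely to help.)
    A residual connection makes the output of an earlier layer available as
    input to a later layer, effectively creating a shortcut in a sequential network.
"""
from keras import layers

x = ...  # placeholder for a 4-D input tensor (left elided, as in the original)
y = layers.Conv2D(128, 3, activation='relu', padding='same')(x)
y = layers.Conv2D(128, 3, activation='relu', padding='same')(y)
y = layers.Conv2D(128, 3, activation='relu', padding='same')(y)

y = layers.add([y, x])  # add the original x back onto the output features

# ----- If the feature maps differ in size, use a linear residual connection -----
x = ...  # placeholder for a 4-D input tensor (left elided, as in the original)
y = layers.Conv2D(128, 3, activation='relu', padding='same')(x)
y = layers.Conv2D(128, 3, activation='relu', padding='same')(y)
y = layers.MaxPooling2D(2, strides=2)(y)

# Use a 1x1 convolution to linearly downsample the original x to the same shape as y.
residual = layers.Conv2D(128, 1, strides=2, padding='same')(x)
y = layers.add([y, residual])  # add the downsampled x onto the output features
```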
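The element-wise add only works when both branches end up with the same shape. The sketch below checks the spatial sizes of the downsampling variant in plain Python, using the standard `'valid'` and `'same'` output-size rules. The helper names are hypothetical, and the example input sizes (32 and 7) are arbitrary choices for illustration.

```python
def pool_valid(size, pool=2, stride=2):
    """Output size of MaxPooling2D(2, strides=2) with its default 'valid' padding."""
    return (size - pool) // stride + 1


def conv_same(size, stride):
    """Output size of a convolution with padding='same'."""
    return -(-size // stride)  # ceil(size / stride)


def branch_sizes(size):
    """Return (main branch size, shortcut size) for the downsampling block."""
    main = size
    for _ in range(2):                 # two 3x3 'same' convolutions keep the size
        main = conv_same(main, 1)
    main = pool_valid(main)            # 2x2 max pooling halves it, rounding down
    shortcut = conv_same(size, 2)      # 1x1 conv, strides=2, rounds up
    return main, shortcut


for size in (32, 7):
    print(size, branch_sizes(size))
```

For an even input size such as 32 both branches give 16, so the add succeeds. For an odd size such as 7 the pooled branch gives 3 while the strided 1×1 convolution gives 4, so under these rules the block as written appears to need even spatial dimensions (or matching padding on both branches).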

2. Weight sharing

That is, calling the same layer instance more than once.

```python
# coding: utf-8
"""
Functional API: weight sharing
    The same layer instance can be reused. Every call reuses that layer's
    weights, so nothing has to be written twice.
"""
from keras import layers
from keras import Input
from keras.models import Model

lstm = layers.LSTM(32)  # instantiate one LSTM layer; it is called several times below

# ------------------------ left branch ------------------------
left_input = Input(shape=(None, 128))
left_output = lstm(left_input)  # call the lstm instance

# ------------------------ right branch -----------------------
right_input = Input(shape=(None, 128))
right_output = lstm(right_input)  # call the same lstm instance again

# ------------------------ merge the two branches -------------
merged = layers.concatenate([left_output, right_output], axis=-1)

# ------------------------ build a classifier on top ----------
predictions = layers.Dense(1, activation='sigmoid')(merged)

# ------------------------ build the model and fit it ---------
model = Model([left_input, right_input], predictions)
# left_data, right_data and targets are not defined in this snippet, and the
# model still has to be compiled (model.compile) before fit can run.
model.fit([left_data, right_data], targets)
```
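One way to see what sharing buys is to count trainable parameters. The sketch below does this by hand for the two-branch model, assuming the standard LSTM layout (four gates, each with an input kernel, a recurrent kernel and a bias). The function names are hypothetical and nothing here calls Keras.

```python
def lstm_params(input_dim, units):
    """Trainable parameters of a standard LSTM layer: 4 gates, each with
    an input kernel, a recurrent kernel and a bias."""
    return 4 * ((input_dim + units) * units + units)


def dense_params(input_dim, units):
    """Trainable parameters of a Dense layer: weights plus biases."""
    return input_dim * units + units


shared_lstm = lstm_params(128, 32)       # one LSTM(32) over 128-dim inputs
classifier = dense_params(32 + 32, 1)    # Dense(1) on the concatenated outputs

with_sharing = shared_lstm + classifier          # the LSTM is counted once
without_sharing = 2 * shared_lstm + classifier   # two independent LSTM layers

print(shared_lstm, with_sharing, without_sharing)
```

Because both branches call the same `lstm` instance, its 20608 weights are stored once and receive gradients from both inputs; two separate `layers.LSTM(32)` instances would roughly double the model size (41281 parameters instead of 20673).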


Original article: https://blog.csdn.net/hjxu2016/article/details/83504756